vrraj-bm25s-retriever

A lightweight BM25S-powered lexical retrieval package for Python applications, REST services, LLM systems, and MCP-based tool workflows.

Use it to route LLM tool calls, filter MCP-discovered tools, search documents, and build fast lexical retrieval layers without running a vector database.

The ingestion model is intentionally flexible: combine your own YAML tool registry, MCP-discovered tools, and documents or tool definitions injected through the REST API into one in-memory BM25S index. The retriever can then be tuned to return the most relevant tool or tool set before anything is passed to the LLM.

Why this exists

Tool-heavy agentic systems can quickly run into context bloat. As tool registries grow, passing every tool definition, description, and parameter schema into the LLM increases token usage, adds latency, and can make tool selection less reliable.

vrraj-bm25s-retriever acts as a small deterministic relevance layer before prompt assembly. It is designed for applications where many tools are available, but only a small subset is relevant for any given request.

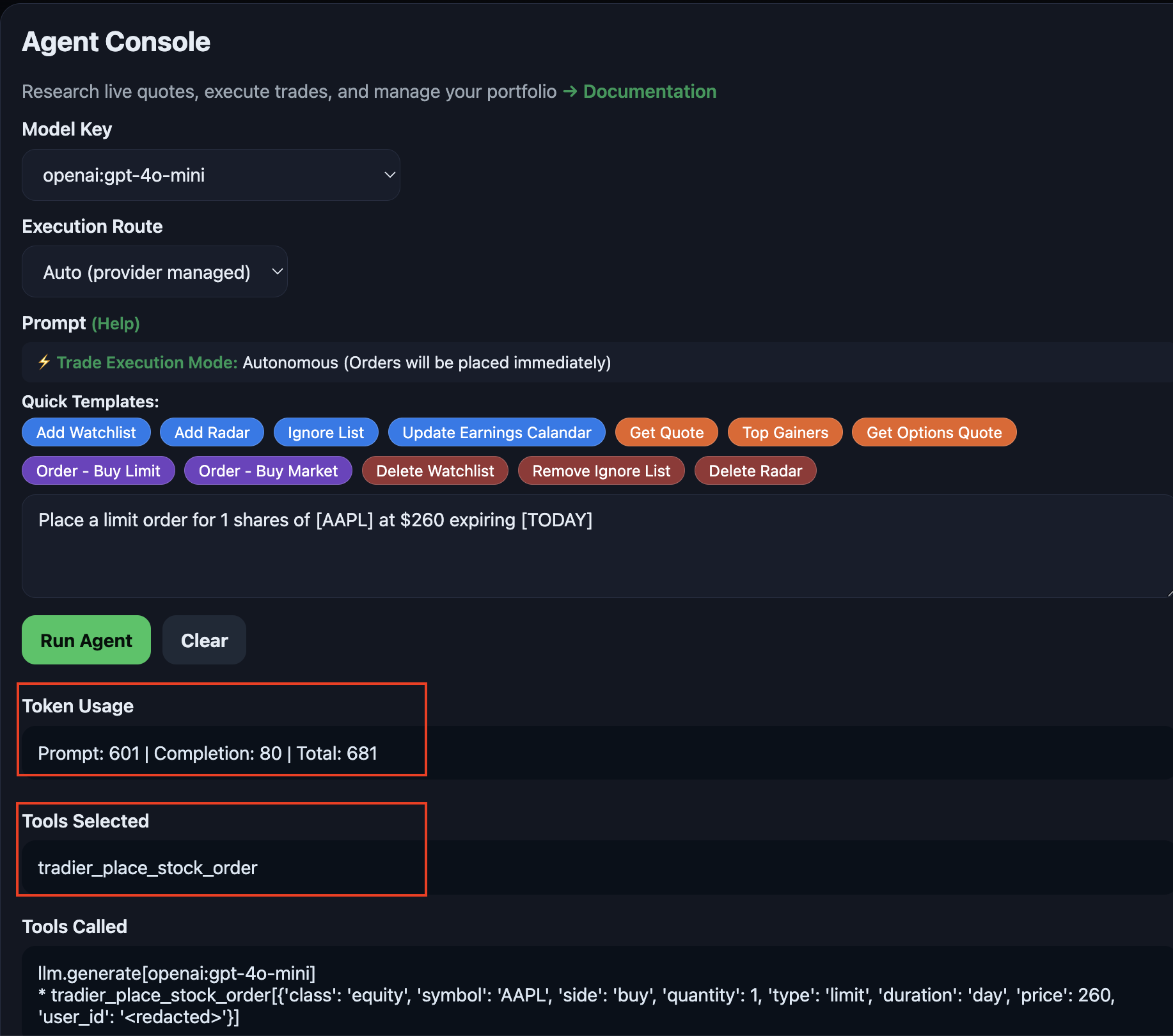

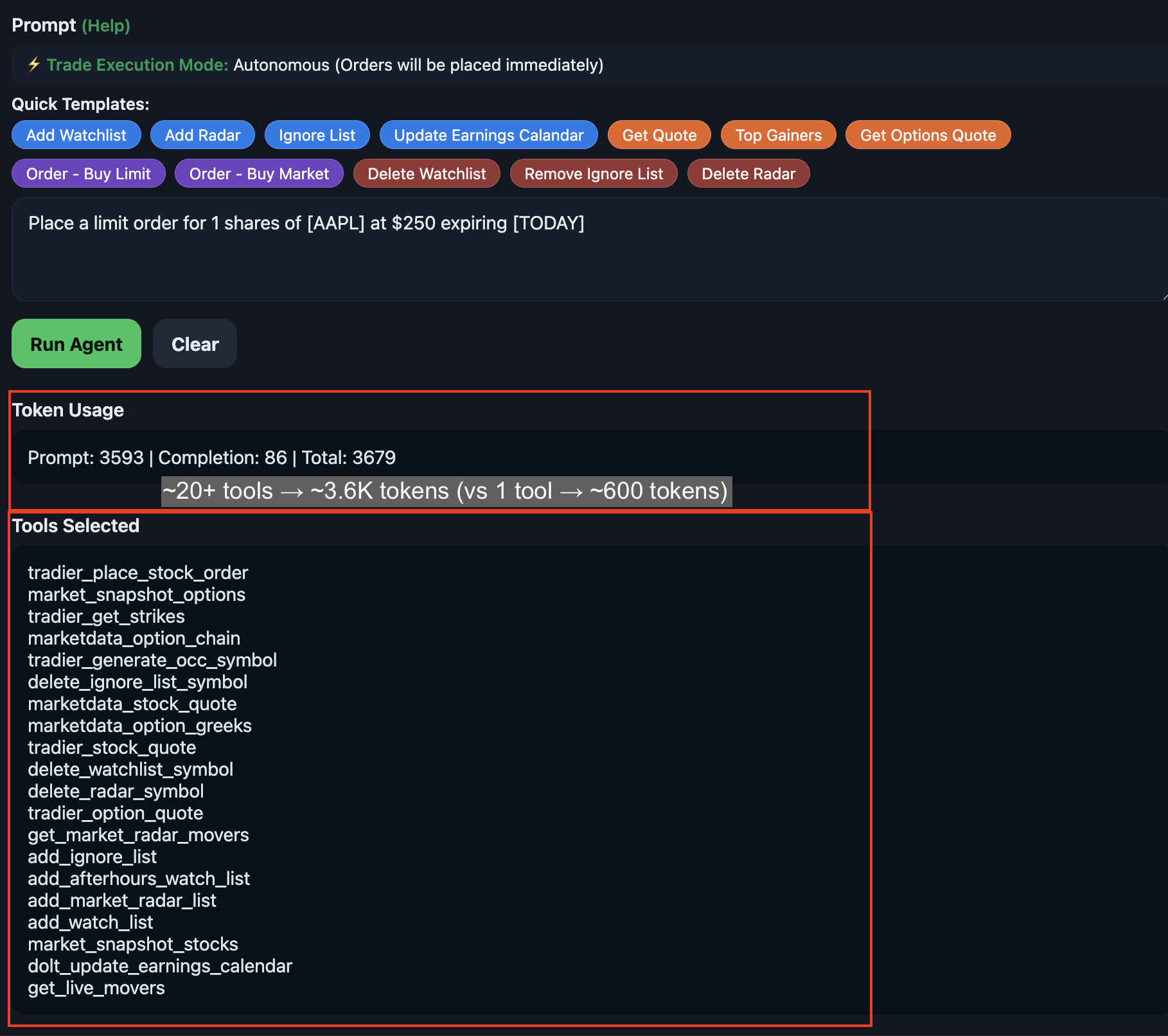

Example from a trading agent built with this routing layer:

- With lexical routing: ~600 tokens, 1 tool

- Without routing: ~3.6K tokens, 20+ tools

Primary use case: LLM and MCP tool routing

Modern agentic systems increasingly discover tools through the Model Context Protocol (MCP), internal registries, and service APIs. MCP standardizes tool discovery, but it does not decide which tools should be passed to the LLM for a specific user request.

That selection step still belongs in the MCP client, host application, or orchestrator.

Discover / Load → Inject → Index → Filter → Focused LLM Context

In practice:

YAML Tool Registry + MCP-Discovered Tools + Internal Tool Definitions

→ Inject into BM25S Index (REST or in-process)

→ Query-Time Tool Filtering

→ Focused LLM Context

Tools can come from YAML, MCP discovery, or internal registries. The client or orchestration layer maps them into BM25S documents and injects them into a unified in-memory index. At query time, BM25S filters the relevant subset before the LLM sees the tool list.
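As a sketch of that mapping step, the function below converts an MCP tool definition (the `name`, `description`, and `inputSchema` fields returned by an MCP `tools/list` call) into a record with the same fields as the `Document` in the quick example further down. `ToolDoc` and `mcp_tool_to_document` are hypothetical stand-ins defined here so the example runs without the package; in an application you would build `bm25s_retriever.Document` objects instead. Deriving keywords from parameter names is one possible heuristic, not documented package behavior.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass
class ToolDoc:
    # Hypothetical stand-in mirroring the Document fields used in the quick example.
    id: str
    title: str
    content: str
    keywords: list[str] = field(default_factory=list)
    metadata: dict[str, Any] = field(default_factory=dict)


def mcp_tool_to_document(tool: dict[str, Any], server: str) -> ToolDoc:
    """Hypothetical helper: map one MCP tool definition to a ToolDoc."""
    name = tool["name"]
    # MCP tools may include an optional display title; fall back to the name.
    title = tool.get("title") or name.replace("_", " ").title()
    description = tool.get("description", "")
    # Heuristic: parameter names often carry useful lexical signal (e.g. "symbol").
    properties = tool.get("inputSchema", {}).get("properties", {})
    keywords = [prop.replace("_", " ") for prop in properties]
    return ToolDoc(
        id=f"{server}:{name}",  # namespace by server to avoid id collisions
        title=title,
        content=description,
        keywords=keywords,
        metadata={"type": "tool", "source": "mcp", "server": server},
    )


tool = {
    "name": "get_quote",
    "description": "Get the latest price quote for a stock symbol.",
    "inputSchema": {"type": "object", "properties": {"symbol": {"type": "string"}}},
}
doc = mcp_tool_to_document(tool, server="broker")
print(doc.id, doc.keywords)  # broker:get_quote ['symbol']
```

Prefixing ids with the server name is a design choice: it keeps two MCP servers that both expose, say, `get_quote` from overwriting each other in a single index, at the cost of ids that differ from the raw MCP tool names.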

Flexible ingestion architecture

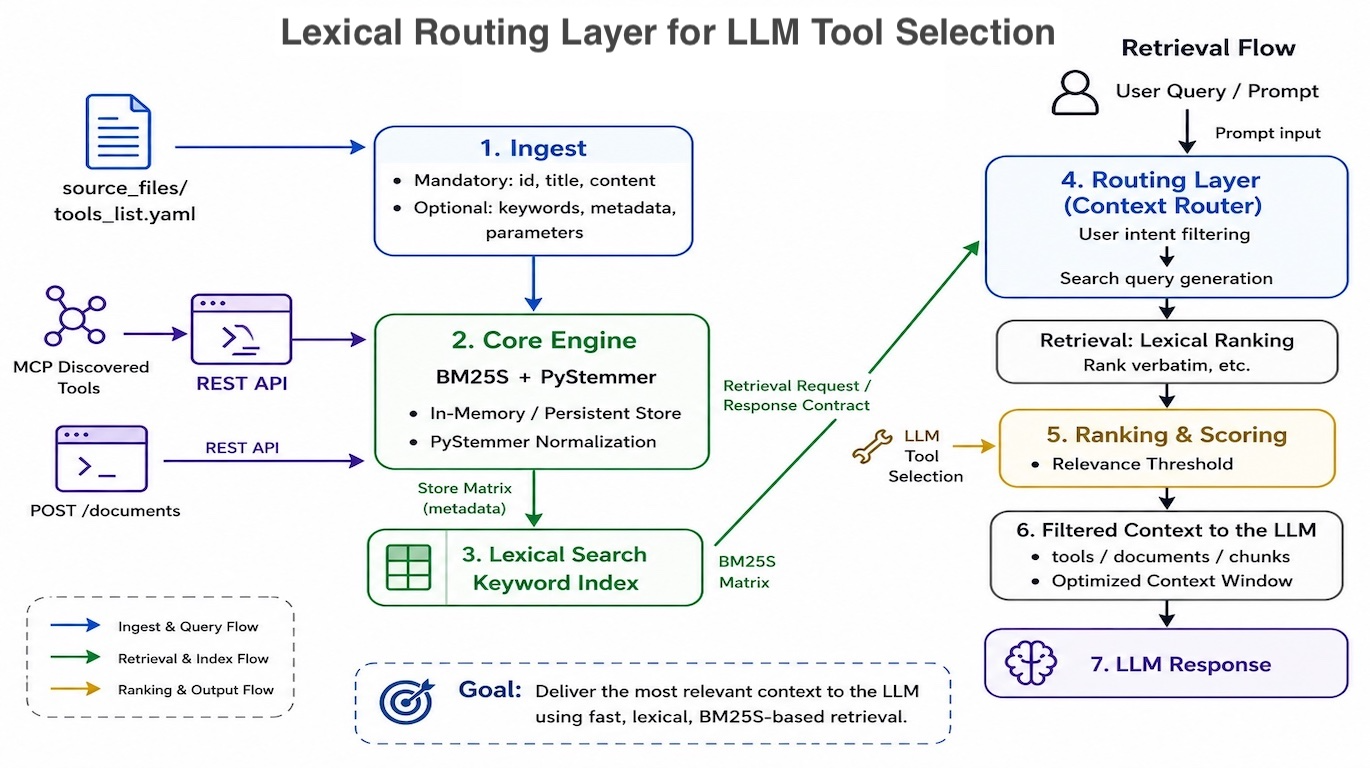

Architecture overview:

The retriever is not limited to one source of truth. A single BM25S index can consolidate:

- Your own YAML-based tool or document registry

- MCP-discovered tools mapped by the client or orchestrator

- Internal tool definitions from application code or service registries

- Documents or tool definitions injected dynamically through the REST API

This allows static definitions and runtime-discovered tools to participate in the same lexical ranking flow. You can tune temperature, cutoff thresholds, keywords, and tool descriptions to control whether the router returns one highly specific tool or a small candidate set for the LLM.
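To make the temperature and cutoff knobs concrete, here is a minimal sketch of a temperature-scaled softmax over raw BM25 scores followed by a percentage cutoff. It assumes scores are normalized this way and that the cutoff drops results below a given percentage; the package's exact normalization may differ, and `softmax_percentages` and `filter_by_cutoff` are hypothetical names.

```python
import math


def softmax_percentages(scores: dict[str, float], temperature: float) -> dict[str, float]:
    """Hypothetical helper: raw scores -> softmax percentages (lower temperature = sharper)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    if not scores:
        return {}
    scaled = {doc_id: s / temperature for doc_id, s in scores.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {doc_id: math.exp(v - peak) for doc_id, v in scaled.items()}
    total = sum(exps.values())
    return {doc_id: 100.0 * e / total for doc_id, e in exps.items()}


def filter_by_cutoff(percentages: dict[str, float], cutoff: float) -> list[tuple[str, float]]:
    """Hypothetical helper: keep results at or above the cutoff percentage, highest first."""
    kept = [(doc_id, p) for doc_id, p in percentages.items() if p >= cutoff]
    return sorted(kept, key=lambda item: item[1], reverse=True)


# Made-up raw scores for illustration.
raw = {"create_order": 6.2, "cancel_order": 4.9, "get_market_movers": 0.8}
for t in (0.5, 2.0):
    print(t, filter_by_cutoff(softmax_percentages(raw, t), cutoff=10.0))
```

With these scores, temperature 0.5 leaves only `create_order` above the 10% cutoff (about 93%), while temperature 2.0 also keeps `cancel_order` (about 63% and 33%). That is the one-tool versus small-candidate-set trade-off described above.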

What you get

- Python retrieval library for programmatic lexical search and tool routing

- YAML-backed document/tool registry support for static tool definitions and document collections

- Runtime document/tool injection for MCP-discovered tools, internal registries, and API-supplied context

- REST service for remote retrieval, dynamic in-memory indexing, and document/tool management

- HTTP client for connecting applications to the BM25S REST service, including remote deployments and service-oriented architectures

- BM25S + PyStemmer for fast stemming-aware lexical matching

- Softmax relevance scoring with configurable temperature and cutoff filtering

- Normalized response schema with scores, rankings, metadata, and settings

- Demo Web UI for testing retrieval behavior, tuning parameters, and refining tool descriptions
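For readers new to BM25, the sketch below scores documents with the classic Okapi BM25 formula (Lucene-style IDF) over lowercase whitespace tokens. It only illustrates the ranking idea: BM25S precomputes scores into sparse structures for speed and, with PyStemmer, stems tokens so that "orders" can match "order", and this toy version does neither. `bm25_scores` is a hypothetical helper, and `k1=1.5`, `b=0.75` are common defaults assumed here.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase whitespace split; real pipelines usually also drop stopwords and stem.
    return text.lower().split()


def bm25_scores(query: str, docs: dict[str, str], k1: float = 1.5, b: float = 0.75) -> dict[str, float]:
    """Hypothetical helper: score each document against the query with Okapi BM25."""
    tokenized = {doc_id: tokenize(text) for doc_id, text in docs.items()}
    n_docs = len(tokenized)
    avgdl = sum(len(toks) for toks in tokenized.values()) / n_docs
    # Document frequency: how many documents contain each term at least once.
    df = Counter(term for toks in tokenized.values() for term in set(toks))
    scores = {}
    for doc_id, toks in tokenized.items():
        tf = Counter(toks)
        norm = k1 * (1 - b + b * len(toks) / avgdl)  # document-length normalization
        score = 0.0
        for term in set(tokenize(query)):
            if term not in tf:
                continue
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + norm)
        scores[doc_id] = score
    return scores


docs = {
    "create_order": "place a buy or sell order for a stock trade",
    "get_market_movers": "retrieve top gaining losing or most active market movers",
}
print(bm25_scores("place a buy order", docs))
```

A document that shares no terms with the query scores exactly zero, which appears to be the case the quick example's `ignore_zero=True` is meant to filter out; the remaining raw scores are what a softmax step would turn into percentages.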

Install

```shell
pip install vrraj-bm25s-retriever
```

To include the REST service extras:

```shell
pip install "vrraj-bm25s-retriever[server]"
```

Quick example

```python
from bm25s_retriever import BM25SRetriever, Document

retriever = BM25SRetriever()

# Index two tool definitions as documents.
retriever.add_documents([
    Document(
        id="create_order",
        title="Create Order",
        content="Place a buy or sell order for a stock or equity trade.",
        keywords=["place order", "buy order", "sell order", "stock trade"],
        metadata={"category": "trading", "type": "tool"},
    ),
    Document(
        id="get_market_movers",
        title="Get Market Movers",
        content="Retrieve top gaining, losing, or most active market movers.",
        keywords=["market movers", "top gainers", "top losers", "most active"],
        metadata={"category": "trading", "type": "tool"},
    ),
])

# Rank the indexed documents against the query, applying softmax
# scoring and cutoff filtering.
results = retriever.retrieve_documents(
    query="place a limit buy order",
    temperature=0.5,
    ignore_zero=True,
    llm_tools_cutoff=10.0,
)

# Print each returned document with its relevance percentage.
for doc in results["documents"]:
    print(doc["id"], doc["title"], doc["score_percentage"])
```
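The retriever returns ranked ids; the orchestrator still has to turn them into the tool list the LLM actually sees. A minimal sketch, assuming a results dict shaped like the one in the quick example (`results["documents"]` entries with an `id` key) and a hypothetical `registry` that maps tool ids to full function schemas; `select_tools` and `max_tools` are illustrative names, not part of the package.

```python
from typing import Any


def select_tools(
    results: dict[str, Any],
    registry: dict[str, dict[str, Any]],
    max_tools: int = 3,
) -> list[dict[str, Any]]:
    """Hypothetical helper: full schemas for the top-ranked tools, in retriever order."""
    selected = []
    for doc in results["documents"]:
        schema = registry.get(doc["id"])
        if schema is None:
            continue  # a plain document hit, or a stale id with no callable tool behind it
        selected.append(schema)
        if len(selected) == max_tools:
            break
    return selected


registry = {
    "create_order": {
        "name": "create_order",
        "description": "Place a buy or sell order.",
        "parameters": {"type": "object", "properties": {"symbol": {"type": "string"}}},
    },
    "get_market_movers": {
        "name": "get_market_movers",
        "description": "Top market movers.",
        "parameters": {"type": "object", "properties": {}},
    },
}
# Example result in the quick-example shape; the score is made up.
results = {"documents": [{"id": "create_order", "title": "Create Order", "score_percentage": 91.0}]}
print([tool["name"] for tool in select_tools(results, registry)])  # ['create_order']
```

Only the selected schemas are placed in the prompt, which is how a registry of 20+ tools can shrink to one or two definitions per request.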

Interactive tuning UI

The GitHub repo includes a FastAPI-powered Demo Web UI for testing retrieval behavior, inspecting ranked results, adding documents, and tuning search parameters.

Use it as a local experimentation environment: load your own YAML documents or tool definitions, inject additional documents or tools through the API, test queries, adjust temperature and cutoff settings, refine keywords and tool descriptions, and see exactly how results are ranked before using the settings in production.

See setup instructions in the README: Demo Web UI
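Once the UI has helped you settle on temperature, cutoff, keywords, and descriptions, it can help to pin that behavior down with a few golden queries run in CI. The sketch assumes only a `retrieve` callable that returns the quick-example result shape; `check_routing` and `stub_retrieve` are hypothetical, and in practice you might pass `lambda q: retriever.retrieve_documents(query=q, temperature=0.5, ignore_zero=True, llm_tools_cutoff=10.0)` with your tuned settings.

```python
from typing import Any, Callable


def check_routing(
    retrieve: Callable[[str], dict[str, Any]],
    cases: list[tuple[str, str]],
) -> list[str]:
    """Hypothetical helper: report queries whose top-ranked id is not the expected tool."""
    failures = []
    for query, expected_id in cases:
        docs = retrieve(query)["documents"]
        top = docs[0]["id"] if docs else None
        if top != expected_id:
            failures.append(f"{query!r}: expected {expected_id}, got {top}")
    return failures


def stub_retrieve(query: str) -> dict[str, Any]:
    # Hypothetical stand-in for the real retriever so the sketch runs on its own.
    doc_id = "create_order" if "order" in query else "get_market_movers"
    return {"documents": [{"id": doc_id, "score_percentage": 100.0}]}


cases = [("place a limit buy order", "create_order"), ("top gainers today", "get_market_movers")]
print(check_routing(stub_retrieve, cases))  # []
```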

Summary

vrraj-bm25s-retriever is a lightweight BM25S-based retrieval layer designed to reduce agentic tool confusion: the less reliable tool selection that occurs when an LLM is handed too many tool definitions at once. Through surgical context filtering, it matches user intent against a bounded set of domain-specific tools before prompt assembly, which improves LLM tool-selection precision and reduces token usage in agents. It gives the orchestration layer a deterministic tool-routing step, unifying static YAML registries and dynamic MCP-discovered tools into a single, optimized search surface.